Everyone's Building AI Agents. Nobody's Building the Trust Layer.

By Tom Meredith

Here's the thing about AI agents in 2026.

Everyone's building them. The frameworks are commoditizing. The APIs are getting cheaper. The models are getting smarter.

But almost nobody is building the thing that actually matters: the operating discipline that makes AI systems safe to trust.

We run a fleet of AI agents across multiple businesses. Not demos. Not prototypes. Production systems handling real marketing, real content, real customer-facing operations, every day.

And the lesson we keep re-learning... across every agent, every domain, every use case... is that the gap between "deployed" and "trustworthy" is where the real work happens.

Most teams stop right at that gap.

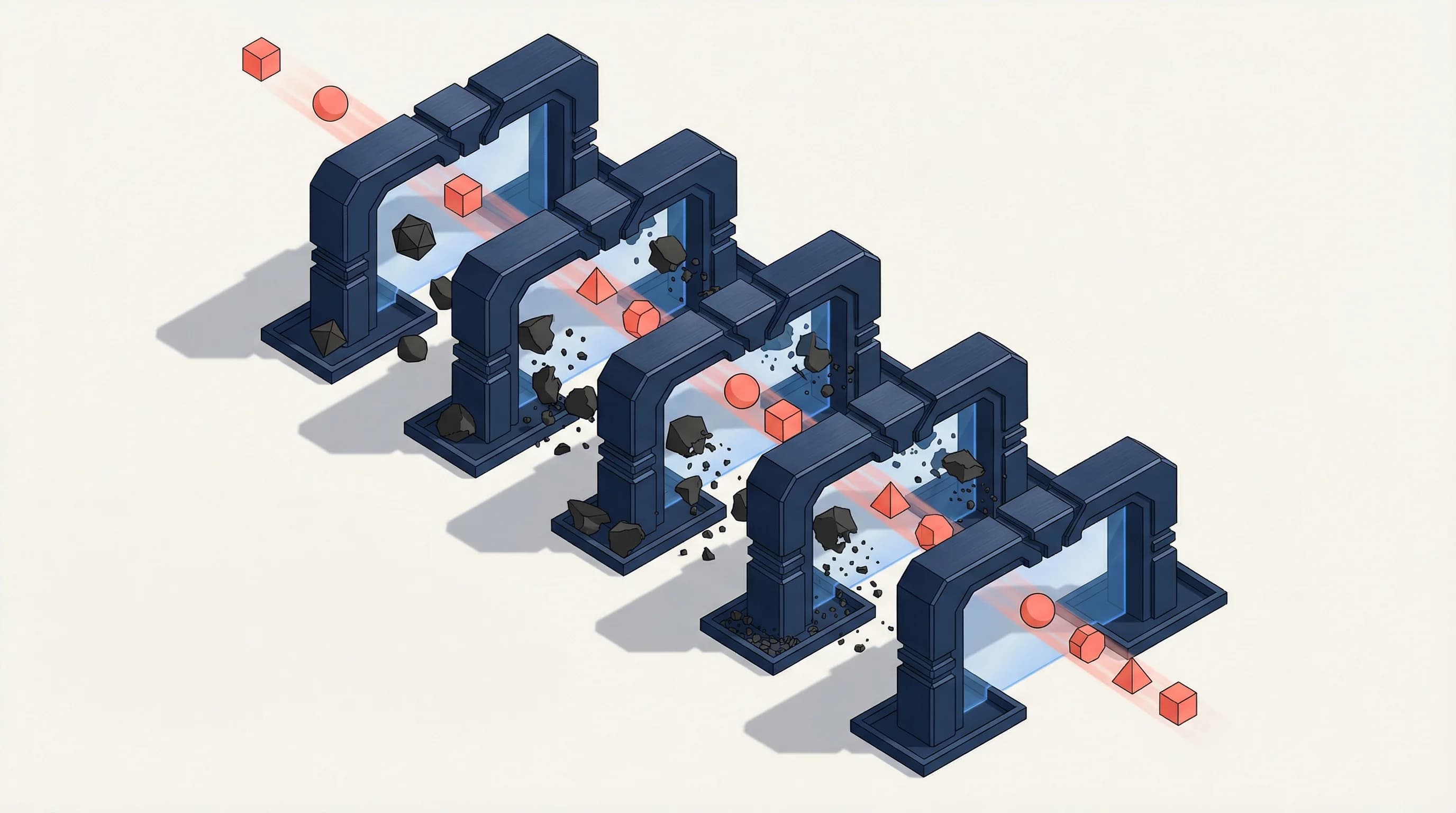

What the Trust Layer Actually Looks Like

It's not a product. It's not a framework. It's an operating discipline.

Here are five things that happened in our operations over a single 24-hour window. Each one illustrates a different component of what we call the trust layer.

1. The 40% Failure Rate Nobody Caught

One of our agents was generating content pages at scale. Everything looked fine from the outside... the pages rendered, the data populated, the URLs resolved.

Then we ran a blast radius analysis.

Roughly 40% of published pages had corrupted data: 79 out of 198. The FAQ sections had been generated with malformed inputs, and the output looked plausible enough that nobody noticed.

Without a verification gate that checked output integrity (not just output existence), those broken pages would have silently polluted our search presence for weeks.

Trust layer component: Output verification that tests correctness, not just completion.
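
A minimal sketch of what such a gate could look like, assuming each generated page is a dict with a hypothetical `faq` list of question/answer pairs. The function names, field names, and specific checks here are illustrative assumptions, not our production code.

```python
import re

# Hypothetical markers of a malformed input leaking into the rendered output.
PLACEHOLDER = re.compile(r"\{\{.*?\}\}|\bundefined\b|\bnull\b")


def verify_page(page: dict) -> list[str]:
    """Return a list of integrity problems; an empty list means the page passes."""
    problems = []
    faq = page.get("faq") or []
    if not faq:
        problems.append("faq section missing or empty")
    for i, item in enumerate(faq):
        question = (item.get("question") or "").strip()
        answer = (item.get("answer") or "").strip()
        if not question.endswith("?"):
            problems.append(f"faq[{i}]: question is not phrased as a question")
        if len(answer) < 20:
            problems.append(f"faq[{i}]: answer is suspiciously short")
        if PLACEHOLDER.search(question) or PLACEHOLDER.search(answer):
            problems.append(f"faq[{i}]: unrendered placeholder or null value")
    return problems


def blast_radius(pages: list[dict]) -> tuple[int, int, float]:
    """Count failing pages and report the failure rate across a published set."""
    failed = sum(1 for page in pages if verify_page(page))
    total = len(pages)
    return failed, total, (failed / total if total else 0.0)
```

The point of the design: a page that exists, renders, and resolves still fails if its content does not pass `verify_page`. Run across a published set, `blast_radius` turns "looks fine" into a number like 79 of 198.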

2. The "Complete" Analytics Setup That Wasn't

We had a fully configured analytics stack. Google Analytics, conversion tracking, event taxonomy... the whole thing.

Then we audited it end-to-end.

Five structural gaps. One example: the contact form captured attribution data into session storage, but the component that submitted the form... never actually read it back. All that tracking infrastructure was collecting data that went nowhere.

"Configured" looked identical to "working" from every dashboard. Only a live-path test that followed real user behavior through the actual production code caught the disconnect.

Trust layer component: Live-path validation, not just configuration checks.
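
Here is the idea in miniature, as a pure-Python model of the path rather than a real browser test. Everything in it is an assumption for illustration: the UTM parameter names, the function names, and the dict standing in for session storage. A real check would drive the production site end to end.

```python
def capture_attribution(storage: dict, query: dict) -> None:
    """Landing step: copy UTM parameters from the URL query into session storage."""
    for key in ("utm_source", "utm_medium", "utm_campaign"):
        if key in query:
            storage[key] = query[key]


def build_submission_broken(storage: dict, form: dict) -> dict:
    """Submit step with the gap: session storage is never read back."""
    return dict(form)


def build_submission_fixed(storage: dict, form: dict) -> dict:
    """Submit step after the fix: stored attribution is merged into the payload."""
    return {**form, **storage}


def live_path_check(build_submission) -> list[str]:
    """Follow one visitor from landing to submit; return attribution keys that were lost."""
    storage: dict = {}
    query = {"utm_source": "newsletter", "utm_campaign": "spring"}
    capture_attribution(storage, query)
    payload = build_submission(storage, {"email": "visitor@example.com"})
    return [key for key in query if payload.get(key) != query[key]]
```

A configuration check would pass both versions, because the capture step works in each. Only `live_path_check`, which inspects what actually arrives at the end of the path, tells them apart.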

3. The Hallucinated Address

A freshly launched business hub page had a street address, phone number, and business hours. All wrong. All hallucinated by the generation model with complete confidence.

For a local business, wrong NAP (Name, Address, Phone) data doesn't just look bad... it can actively damage your local search visibility. In local SEO practice, inconsistent contact data is widely treated as a trust-breaking signal.

Same-day QA verification against source-of-truth databases caught it before any crawler indexed it.

Trust layer component: Source-of-truth verification for every factual claim an AI generates.
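
A rough sketch of that comparison, assuming the generated page and the source-of-truth record are both available as dicts with hypothetical `name`, `address`, `phone`, and `hours` fields. The normalization rules are deliberately simple assumptions; real address matching needs more care.

```python
import re


def normalize_phone(value: str) -> str:
    """Keep digits only, so formatting differences do not count as mismatches."""
    return re.sub(r"\D", "", value)


def normalize_text(value: str) -> str:
    """Lowercase and collapse whitespace."""
    return " ".join(value.lower().split())


def nap_mismatches(generated: dict, source: dict) -> dict:
    """Return {field: (generated_value, source_value)} for every field that disagrees."""
    mismatches = {}
    for field in ("name", "address", "phone", "hours"):
        normalize = normalize_phone if field == "phone" else normalize_text
        got = generated.get(field, "")
        want = source.get(field, "")
        if normalize(got) != normalize(want):
            mismatches[field] = (got, want)
    return mismatches
```

Any non-empty result blocks publication. The generated value is never trusted on its own; it only ships when it matches the record.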

4. The Permission Model Nobody Designed

A team was mapping out 15 distinct types of AI operators for an enterprise control plane. The first instinct was to build 15 separate agent systems, each with its own permissions, its own behavioral rules, its own deployment.

The better answer: one authenticated control plane with policy presets. Permission levels from 0 (read-only) to 5 (full autonomy). Draft-first behavior at every level. Approval gates that match the risk, not the role.

The key realization wasn't technical. It was operational: personas should be policy presets, not separate systems. You don't need more agents. You need better permission models.

Trust layer component: Risk-calibrated permission architecture, not role-based access theater.
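
One way to sketch "personas as policy presets", under stated assumptions: the 0 to 5 levels come from the design above, but the per-action `risk` score, the persona names, and the rule that an action needs human approval when its risk exceeds the preset's level are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW_READ = "allow_read"
    DENY = "deny"
    DRAFT_NEEDS_APPROVAL = "draft_needs_approval"
    DRAFT_AUTO_APPROVED = "draft_auto_approved"


@dataclass(frozen=True)
class Preset:
    level: int  # 0 = read-only ... 5 = full autonomy


@dataclass(frozen=True)
class Action:
    name: str
    risk: int             # hypothetical 1-5 score assigned per action type
    mutates: bool = True  # False for pure reads


# Personas are just named presets on one control plane (names are made up).
PRESETS = {
    "analyst": Preset(level=0),
    "content_editor": Preset(level=2),
    "ops_lead": Preset(level=5),
}


def decide(preset: Preset, action: Action) -> Decision:
    """Gate on the risk of the action; the persona only supplies a level."""
    if not action.mutates:
        return Decision.ALLOW_READ
    if preset.level == 0:
        return Decision.DENY
    # Draft-first at every level: every write becomes a draft before it executes.
    if action.risk > preset.level:
        return Decision.DRAFT_NEEDS_APPROVAL
    return Decision.DRAFT_AUTO_APPROVED
```

Adding a sixteenth operator type in this model means adding one entry to `PRESETS`, not deploying another agent system.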

5. The Broken Creative That Almost Went Live

An agent built ad creatives for a paid campaign. First render pass: broken output. Images didn't load correctly, copy was misaligned, the whole thing was unusable.

Without a "QA before load" rule... that broken creative would have been pushed directly into a live ad campaign. Real money, real impressions, real brand damage.

Trust layer component: Pre-deployment QA gates that prevent broken output from reaching production.
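
A "QA before load" rule can be sketched as a wrapper that makes the upload unreachable unless the checks pass. The `Creative` shape, the headline limit, and the `upload` callback are hypothetical. The PNG signature check only catches missing or truncated renders at the header level; a fuller gate would decode the image and inspect the layout.

```python
from dataclasses import dataclass
from typing import Callable

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"  # standard 8-byte PNG file header
MAX_HEADLINE_CHARS = 40                # hypothetical copy limit


class QAFailed(Exception):
    """Raised when a creative is blocked before reaching the ad platform."""


@dataclass
class Creative:
    headline: str
    image_bytes: bytes


def qa_creative(creative: Creative) -> list[str]:
    """Return a list of problems; an empty list means the creative may be loaded."""
    problems = []
    if not creative.image_bytes.startswith(PNG_SIGNATURE):
        problems.append("image is missing or not a valid PNG render")
    if not creative.headline.strip():
        problems.append("headline is empty")
    elif len(creative.headline) > MAX_HEADLINE_CHARS:
        problems.append("headline exceeds the copy limit")
    return problems


def load_to_campaign(creative: Creative, upload: Callable[[Creative], None]) -> None:
    """The only path to the campaign: QA runs first, upload runs only if it passes."""
    problems = qa_creative(creative)
    if problems:
        raise QAFailed("; ".join(problems))
    upload(creative)
```

The agent never calls `upload` directly. A broken first render raises `QAFailed` and stays out of the live campaign.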

The Pattern

These aren't edge cases. They're not bad luck or immature tooling.

This is what running AI agents in production looks like every single day.

And the pattern is consistent: the gap between "it ran" and "it's right" is where trust gets built or destroyed. Most teams treat deployment as the finish line. The operators who survive treat deployment as the starting line.

Why This Matters for Your Business

If you're evaluating AI agents for your operations... or if you're already running them... here's what most vendors won't tell you:

Building the agent is the easy part.

The hard parts are:

- Permission models that match the actual risk of what the agent can do

- Draft-first behavior where agents propose actions and wait for approval before executing high-stakes operations

- Verification loops that check output quality, not just output existence

- Blast radius analysis before every production change

- Source-of-truth validation for any factual claim an AI generates

- The cultural discipline to treat "deployed" as the starting line, not the finish line

This is the trust layer. It's not glamorous. It doesn't demo well. You can't buy it from a vendor.

But it's the difference between AI agents that work and AI agents your business can actually rely on.

The Market Gap

Right now the AI agent conversation is dominated by two camps.

Camp 1: "Look how cool these agents are!" (Framework comparisons, benchmark wars, demo videos.)

Camp 2: "Here's how to build AI agents in 10 minutes." (Tutorials, templates, starter kits.)

Almost nobody is in Camp 3: "Here's what it takes to trust AI agents in production."

That's the gap. And it's where the actual value lives... because every business that deploys agents will eventually discover that building them was the small problem.

Making them trustworthy is the real work.

At Supertrained, we build AI agent marketing systems for businesses that need more than demos. If you're navigating the gap between "deployed" and "trustworthy," book a conversation and let's talk about what your trust layer should look like.
