AI Automation Agency Operating System: The Founder Playbook

By Tom Meredith

Every YouTube video, every Reddit thread, every "How to Build an AI Automation Agency in 2026" guide tells you the same thing: pick a niche, learn n8n or Make, find clients, deliver automations.

That's the easy part.

The hard part starts week two. When your agent's output drifts and nobody catches it for three days. When a client asks "is this actually working?" and you realize you don't have a number to show them. When you wake up to discover your automation ran 47 times overnight because nobody defined a circuit breaker.

Tools are 10% of the problem. Operating discipline is 90%.

We run a production fleet of five AI agents across three businesses. Every day, they execute real work — content production, SEO monitoring, competitor analysis, prospect research, client deliverables. Not demos. Not proofs of concept. Production.

Here's what we learned about the part nobody teaches: operating the thing once it's running.

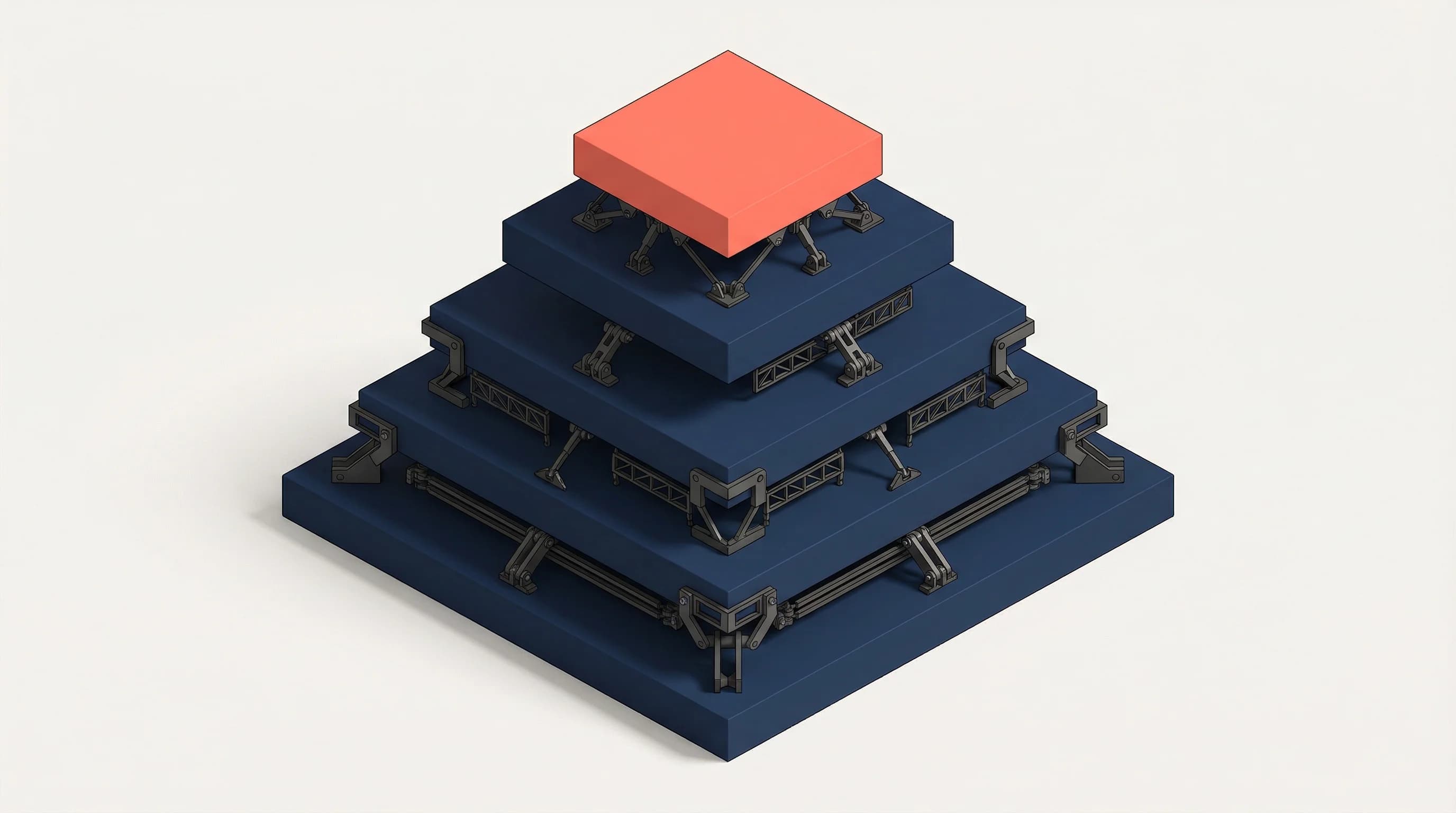

The Five Operating Layers

Every AI automation agency needs the same five layers. Skip one and you'll discover why the hard way.

1. Autonomy Definition

Before an agent touches a single task, you need to answer one question: what can it do alone?

This isn't philosophical. It's a permissions table. For every workflow, define:

- Green lane: Agent executes without asking. File reads, research, drafting, internal analysis.

- Yellow lane: Agent drafts, human reviews before anything goes external. Content publishing, client communications, strategy recommendations.

- Red lane: Agent cannot proceed without explicit approval. Spending money, sending emails as a human, making commitments to prospects.

If you don't define this on day one, you'll spend week two firefighting. We learned this after an agent helpfully sent a prospect email that hadn't been approved. The email was fine. The precedent was not.

The autonomy table isn't about trust — it's about blast radius. An agent that edits a file and gets it wrong costs you five minutes. An agent that sends a client the wrong pricing costs you the relationship.
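A minimal sketch of the permissions table in Python. The action names are hypothetical, and defaulting unlisted actions to the red lane is our assumption here, not a rule stated above; it simply keeps the blast radius small when the table has a gap.

```python
from enum import Enum


class Lane(Enum):
    GREEN = "green"    # agent executes without asking
    YELLOW = "yellow"  # agent drafts, human reviews before anything goes external
    RED = "red"        # agent cannot proceed without explicit approval


# Hypothetical action names; a real table lists every action in every workflow.
AUTONOMY_TABLE = {
    "read_file": Lane.GREEN,
    "research": Lane.GREEN,
    "draft_content": Lane.GREEN,
    "publish_content": Lane.YELLOW,
    "client_communication": Lane.YELLOW,
    "spend_money": Lane.RED,
    "send_email_as_human": Lane.RED,
}


def lane_for(action: str) -> Lane:
    """Look up an action's lane. Unlisted actions default to RED (an assumption)."""
    return AUTONOMY_TABLE.get(action, Lane.RED)


def may_proceed(action: str, human_reviewed: bool = False, approved: bool = False) -> bool:
    """Return True if the agent may carry out the action right now."""
    lane = lane_for(action)
    if lane is Lane.GREEN:
        return True
    if lane is Lane.YELLOW:
        return human_reviewed
    return approved  # RED: explicit approval only
```

Under this sketch, `may_proceed("research")` is allowed immediately, while `may_proceed("send_email_as_human")` stays blocked until someone passes `approved=True`.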

2. Measurement Cadence

Hope is not a measurement strategy. Neither is "I'll check on it when I have time."

Every piece of work needs a measurement schedule:

- 24 hours: Is it live? Did it deploy correctly? Basic health check.

- 72 hours: Early signal. Engagement, errors, any indication of trajectory.

- 7 days: Real data. Enough signal to know whether something is working or needs adjustment.

- 30 days: Verdict. Ship, scale, or kill.

Without this cadence, you end up in one of two failure modes. Either you obsessively check everything hourly (expensive, anxious, counterproductive) or you forget about it entirely and rediscover it three weeks later when the client asks for an update.

We capture baselines at every checkpoint. Not because the data is always actionable — sometimes a 24-hour baseline tells you nothing. Because the discipline of capturing it forces you to look, and looking is where you catch problems before they become crises.
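One way to make the cadence hard to forget is to compute the checkpoints the moment something ships. The sketch below is illustrative: the function names and the idea of tracking captured baselines as a set of labels are assumptions, not a description of our tooling.

```python
from datetime import datetime, timedelta

# The four checkpoints from the cadence above.
CHECKPOINTS = [
    ("24h", timedelta(hours=24), "Is it live? Basic health check."),
    ("72h", timedelta(hours=72), "Early signal: engagement, errors, trajectory."),
    ("7d", timedelta(days=7), "Real data: working, or needs adjustment?"),
    ("30d", timedelta(days=30), "Verdict: ship, scale, or kill."),
]


def measurement_schedule(shipped_at: datetime) -> list:
    """Return (label, due_time, question) for each checkpoint."""
    return [(label, shipped_at + offset, question)
            for label, offset, question in CHECKPOINTS]


def due_checkpoints(shipped_at: datetime, now: datetime, captured: set) -> list:
    """Labels whose due time has passed and whose baseline is not yet captured."""
    return [label
            for label, due, _ in measurement_schedule(shipped_at)
            if due <= now and label not in captured]
```

Running `due_checkpoints` once a day against every live piece of work gives you a list of overdue baselines instead of a vague sense that you should check on something.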

3. Escalation Architecture

Every system fails. The question isn't whether your agents will produce garbage — they will. The question is: how fast do you catch it?

Build two mechanisms:

Circuit breakers. Hard rules that stop execution when conditions are met. If a task is blocked for 48 hours, escalate automatically. If an agent's output fails quality checks twice in a row, pause and flag. If costs exceed a threshold, stop.

Circuit breakers aren't about distrust. They're about catching drift before it compounds. An agent that's 5% off on Monday is 15% off by Wednesday and producing client-visible garbage by Friday.
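The three rules above can be sketched as a single check that runs before every agent step. The `TaskState` fields, the action strings, and the priority order (cost first, then quality, then blocked time) are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TaskState:
    hours_blocked: float = 0.0
    consecutive_quality_failures: int = 0
    cost_usd: float = 0.0


def check_breakers(state: TaskState, cost_limit_usd: float) -> Optional[str]:
    """Return the action for the first tripped breaker, or None if all clear."""
    if state.cost_usd > cost_limit_usd:
        return "stop"            # costs exceeded the threshold
    if state.consecutive_quality_failures >= 2:
        return "pause_and_flag"  # failed quality checks twice in a row
    if state.hours_blocked >= 48:
        return "escalate"        # blocked for 48 hours
    return None
```

The point of returning a named action rather than raising an exception is that the orchestrating code, not the agent, decides what "stop" or "escalate" means in practice.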

Escalation paths. When an agent hits a wall, it needs to know exactly who to talk to and how. Not "ask for help" — that's vague enough to be useless. Instead: "If blocked on pricing decisions, flag Tom. If blocked on implementation, route to Cordelia. If blocked for more than 72 hours, escalate to the coordinator."
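Written down as data, the example routing rule looks roughly like this. Sending unknown blocker types to the coordinator is our assumption; the names come from the example above.

```python
# Routing table from the example: blocker type -> who gets flagged.
ESCALATION_ROUTES = {
    "pricing": "Tom",
    "implementation": "Cordelia",
}
COORDINATOR = "coordinator"
MAX_BLOCKED_HOURS = 72


def escalation_target(blocker_type: str, hours_blocked: float) -> str:
    """Return who should hear about a blocked task."""
    # Anything blocked for more than 72 hours goes to the coordinator.
    if hours_blocked > MAX_BLOCKED_HOURS:
        return COORDINATOR
    # Unknown blocker types also go to the coordinator (an assumption).
    return ESCALATION_ROUTES.get(blocker_type, COORDINATOR)
```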

The architecture matters more than the agents. A mediocre agent with a good escalation system outperforms a brilliant agent with no safety net.

4. Memory and Continuity

Here's a fact that surprises people: by default, AI agents wake up with no memory. Unless you build persistence around them, every session starts fresh. They don't remember yesterday's conversation, last week's decision, or the client's preference that you painstakingly explained three sessions ago.

This is the single largest operational challenge in running an AI agency, and almost nobody talks about it.

You need three tiers:

Working memory — the hot context an agent needs every single turn. Current priorities, active blockers, what's waiting on whom. Keep it small (under 12,000 characters) or it gets silently truncated, and your agent starts losing information without knowing it.

Retrievable memory — the full body of knowledge the agent can search when a topic comes up. Past decisions, client notes, measurement baselines, pattern libraries. This doesn't load every turn — it surfaces automatically when semantically relevant.

Archived memory — completed projects, historical data, lessons learned. Not actively searched but available when you need to answer "what happened in January?"

The failure mode we see in every new agency: they dump everything into working memory. The agent's context fills up, important details get pushed out, and the agent starts making decisions without information it should have. Managing memory architecture isn't glamorous. It's the difference between an agent that gets smarter every week and one that repeats the same mistakes.
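A rough sketch of guarding the working-memory budget so that overflow is an explicit decision rather than a silent truncation. It assumes entries are stored oldest first and that the newest entries are the ones worth keeping hot, which will not hold for every workflow; the function name is hypothetical.

```python
WORKING_MEMORY_LIMIT = 12_000  # characters, the budget mentioned above


def split_by_budget(entries: list, limit: int = WORKING_MEMORY_LIMIT) -> tuple:
    """Split entries (oldest first) into (working, demoted).

    Keeps the newest entries whose joined text stays under the limit;
    everything older is returned for the retrievable tier.
    """
    kept = []
    used = 0
    for entry in reversed(entries):
        cost = len(entry) + 1  # +1 for the newline that joins entries
        if used + cost > limit:
            break
        kept.append(entry)
        used += cost
    kept.reverse()
    demoted = entries[: len(entries) - len(kept)]
    return kept, demoted
```

Whatever lands in `demoted` should be written to the retrievable tier, not dropped. The goal is to move information down a tier on purpose instead of losing it by accident.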

5. Proof and Accountability

If you can't show a client what happened, it didn't happen.

This is where AI agencies either build trust or destroy it. Every workflow should produce:

- Decision logs. What did the agent decide, and why? Not a novel — a one-liner per decision with reasoning.

- Artifact trails. What was produced? Where does it live? What version is current?

- Measurement baselines. Before and after numbers. Even if the "before" is zero.
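A decision log can be as plain as one JSON line per decision. The field names below are illustrative assumptions, not a required schema.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def decision_entry(agent: str, decision: str, reason: str,
                   artifact: Optional[str] = None,
                   when: Optional[datetime] = None) -> str:
    """Build one JSON line: what was decided, why, and where the output lives."""
    when = when or datetime.now(timezone.utc)
    record = {
        "ts": when.isoformat(),
        "agent": agent,
        "decision": decision,
        "reason": reason,
    }
    if artifact is not None:
        record["artifact"] = artifact  # path or URL of what was produced
    return json.dumps(record)
```

Appending each line to a file per workflow gives you a trail you can search, and the raw material for the weekly report.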

Weekly operating reports beat monthly invoices. Show the measurement cadence in action: "Here's what we tested this week. Here's what we learned. Here's what we're changing."

The transparency advantage is real and underused. Most agencies hide behind deliverables and hope clients don't ask too many questions, and those agencies end up competing on price. Agencies that show their work, including the failures, win trust. Pick your lane.

The Unit Economics Nobody Discusses

Token costs are the cheapest part of running an AI agent. For context: a full year of an agent producing knowledge-worker-equivalent output costs roughly $250 in compute.

That number shocks people. It's also misleading in isolation.

The real costs:

- Human review time. Someone has to read, validate, and approve agent output. At scale, this is your largest line item.

- Quality verification. Did the automation actually work? Are the numbers right? Does the content meet the standard?

- Context management. Keeping memory systems current, updating working documents, maintaining continuity between sessions.

- Error recovery. When something breaks — and it will — how much human time does it take to identify, fix, and prevent recurrence?

The ratio that matters: agent hours per hour of human oversight. If it's 1:1, you don't have automation. You have a very expensive typewriter.

In our fleet, after three weeks of optimization, we operate at roughly 4:1 — four hours of agent work per one hour of human oversight. That ratio improves as the agents learn patterns and the operating system matures. But it started closer to 1.5:1. Plan accordingly.
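To see what those ratios mean for staffing, here is the arithmetic as a small sketch. The 40 agent hours per week in the usage note is a made-up figure for illustration; only the 4:1 and 1.5:1 ratios come from the text.

```python
def leverage(agent_hours: float, human_hours: float) -> float:
    """Agent hours delivered per hour of human oversight."""
    if human_hours <= 0:
        raise ValueError("human_hours must be positive")
    return agent_hours / human_hours


def human_hours_needed(agent_hours: float, ratio: float) -> float:
    """Oversight hours implied by a given agent:human ratio."""
    if ratio <= 0:
        raise ValueError("ratio must be positive")
    return agent_hours / ratio
```

For a hypothetical 40 agent hours a week, `human_hours_needed(40, 1.5)` comes to about 26.7 hours of oversight, while `human_hours_needed(40, 4)` is 10. Same output, well under half the human time.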

Black Box Comfort

Here's the management philosophy that separates operators who scale from operators who burn out: you have to be comfortable not controlling every output.

AI agents are probabilistic systems. They won't produce the exact same output twice. The first draft will be 70% right. The human edit — that 30% — is where your agency value actually lives.

Clients don't want perfection. They want predictable quality within a defined range. Define the range explicitly. "This content will be publication-ready after one human review pass" is a promise you can keep. "This content will be perfect on the first pass" is a promise that will destroy your margins.

The founders who struggle with AI automation are almost always the ones who can't let go of deterministic thinking. They want to know exactly what the agent will say before it says it. That's not how this works. You manage the system, not the individual token. We've written more about this in The AI Skill Nobody Talks About.

What This Looks Like at Scale

Scaling from one agent to five changes everything except the principles.

What changes:

- Memory architecture gets shared. Agents need to know what other agents have learned. A pattern discovered in content production is relevant to prospect research.

- Coordination becomes its own discipline. Who routes work? Who resolves conflicts when two agents need the same resource? Who synthesizes lessons across the fleet?

- Escalation paths multiply. Five agents hitting walls in different domains simultaneously requires a coordinator, not just a founder checking Slack.

What doesn't change:

- Autonomy boundaries still need explicit definition per agent, per task.

- Measurement cadence still runs 24h/72h/7d/30d.

- Proof and accountability still produce artifacts that clients can see.

The compounding effect is real. Each week, the fleet gets better because every agent's lessons feed back into shared patterns. A mistake made once becomes a documented anti-pattern. A tactic that works gets propagated to every relevant workflow.

After three weeks, our fleet operates with institutional knowledge that would take a human team months to develop. After three months, it's unrecognizable from where it started.

This is the actual competitive advantage of an AI automation agency. Not the tools — anyone can access the same APIs. Not the prompts — prompt engineering is the most commoditized skill in tech. The operating system. The measurement discipline. The memory architecture. The proof trail.

That's what Supertrained builds. Not agents in isolation — marketing systems that run them. From autonomous content pipelines to meaning engine optimization, the operating system is the product.

Building an AI automation agency? The operating system is more important than the agents. Talk to us about building yours →
